Long time no see!

Hey all, it's been a while since I've made a post, so I figured it was time to get the gears spinning again.

First off, allow me to explain:

Life has been rather hectic lately. My partner hopped jobs a few times, which limited my budget, and I've been pouring time into my other hobbies, so labbing and tech have been on the back burner for a while. But that doesn't mean I haven't done a few notable things here and there!

New Server "VMhost3"

I found that attempting to run disk-intensive applications like game servers and file servers over a 1Gbps NFS connection was absolutely silly, and performance was abysmal.

As of writing this post, I have 5 VMs running over a single line, which, in simple terms, oversaturates it considerably. This works well for small VMs like DCs and single-user VMs like my jump-box, but performs poorly for read/write-intensive applications.

As a result, I decided it was time for a machine with a good number of drive bays, a CPU with a solid clock speed, DDR4, and of course enterprise features like OOB management, multiple NICs and a RAID card.
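To put a rough number on that, here's a back-of-the-envelope sketch. It assumes all VMs hit the storage link at the same time and split it evenly, and it ignores NFS and TCP overhead, so real-world figures would be lower. The function name is made up for illustration.

```python
def per_vm_share_mb_s(link_gbps: float, vm_count: int) -> float:
    """Best-case even split of a shared link, in megabytes per second."""
    link_mb_s = link_gbps * 1000 / 8  # 1 Gbps is 1000 Mbps, 8 bits per byte
    return link_mb_s / vm_count


share = per_vm_share_mb_s(link_gbps=1, vm_count=5)
print(f"{share:.0f} MB/s per VM")  # prints "25 MB/s per VM"
```

Even in that best case, each VM gets a fraction of what a single local SATA SSD can deliver, which lines up with the sluggish game and file servers.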

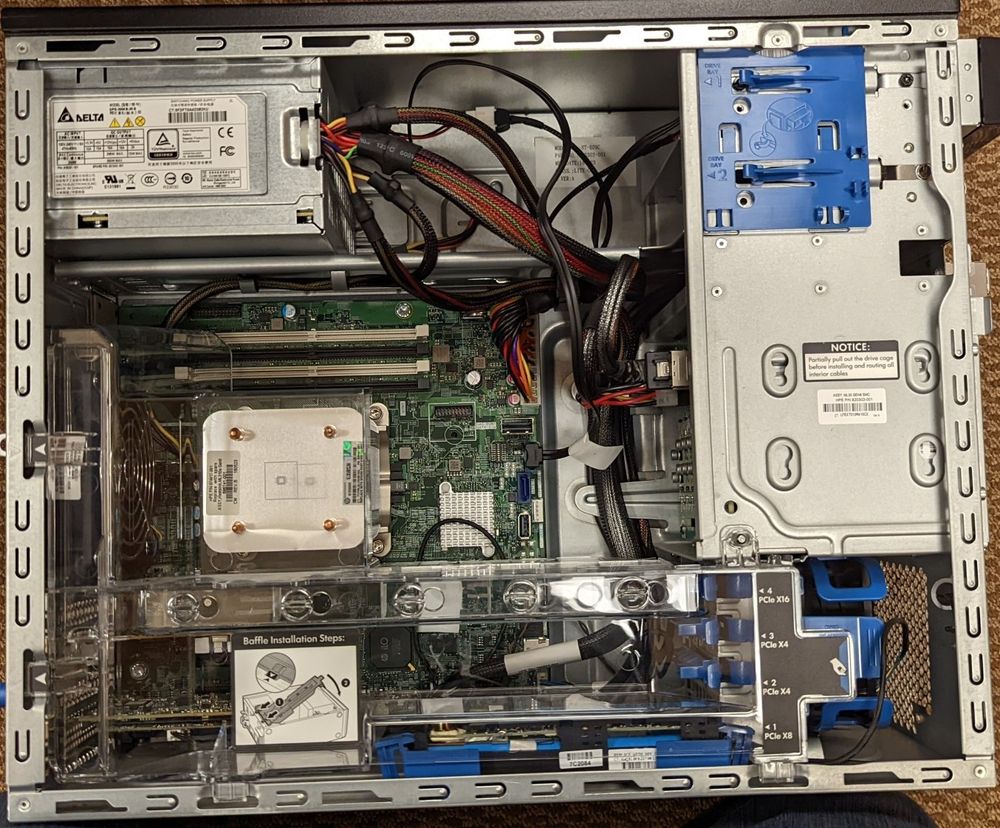

After investigating on Reddit and crawling my usual go-to sites, I stumbled upon this gem of a "workstation" tower:

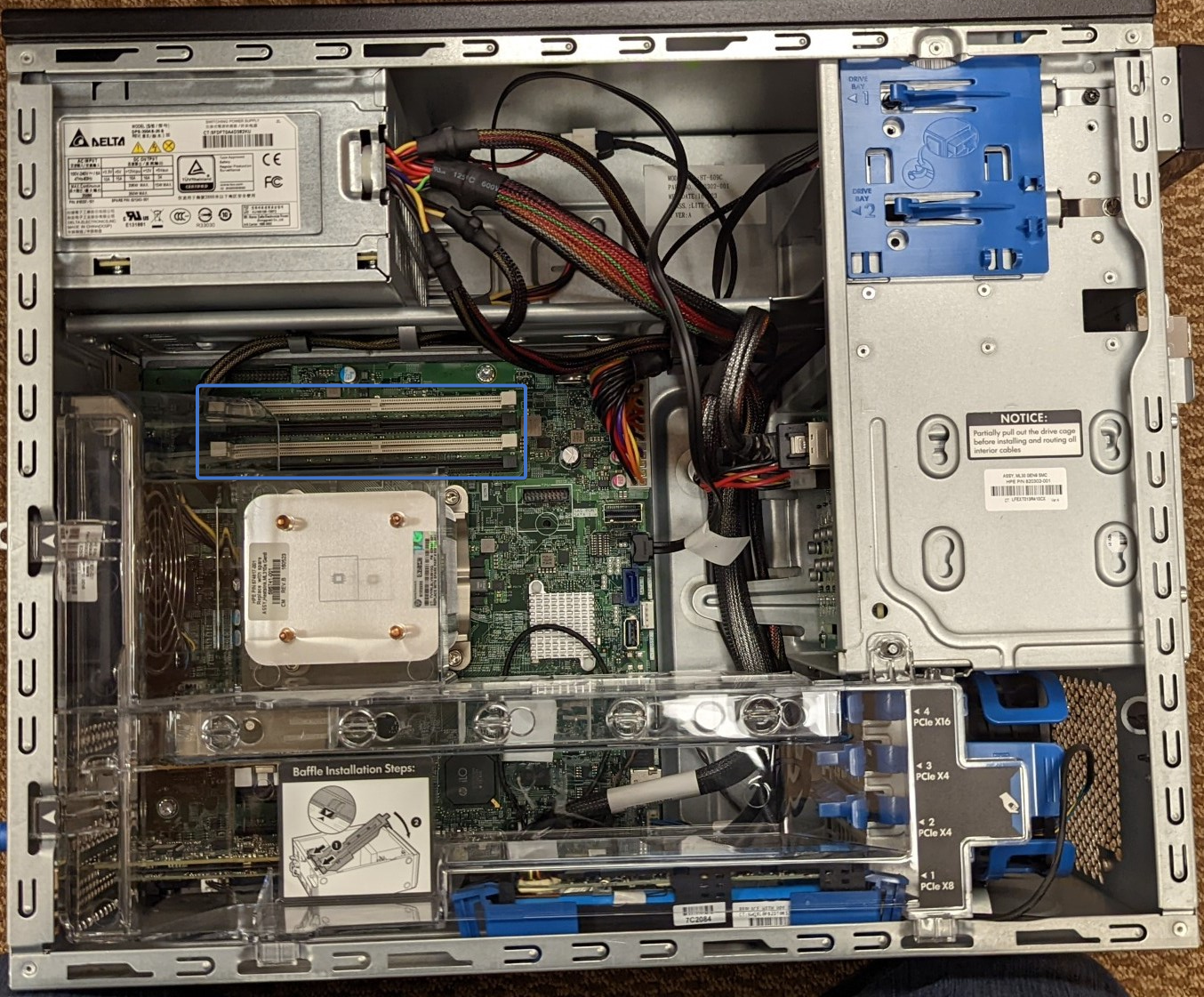

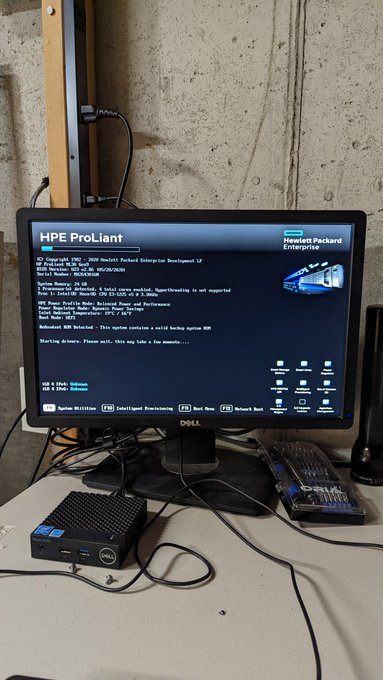

The HPE ML30 G9 is a scalable workstation that takes Skylake-era Xeon CPUs and has 4 DDR4 ECC DIMM slots, 8 hot-swappable 2.5" drive bays, dual NICs, and iLO 4. It also comes with an HPE Smart Array P440 Controller for RAID operations by default.

I got mine from a server refurbishing company, and it has the following specs:

- Intel Xeon E3-1225v5

- 24GB of DDR4 ECC (UDIMM, 1x16, 2x4)

- HPE Smart Array P440 Controller

- HP 331FLR (Quad-NIC, I personally removed this as I don't need it)

- (2x) Intel SSD DC S3500 480GB Drives (VM Storage, ZFS RaidZ1)

- (1x) Crucial MX500 250GB SSD (Proxmox Install Disk)

There was a small hiccup when I received it: they somehow forgot to actually build the server, so there wasn't any RAM or any drives installed. They were nice about it, though, and shipped out the missing parts next-day.

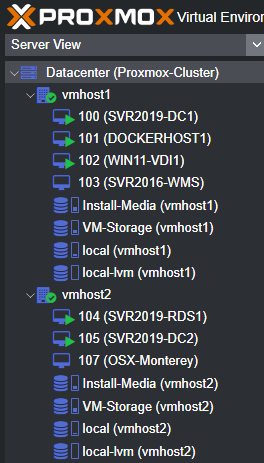

After I got it up and running, I immediately updated the BIOS, firmware, etc. before installing Proxmox as the hypervisor and joining it to my existing cluster.

After all of that, I was able to run some "tests" by deploying a Minecraft server and a DayZ server on Windows Server 2019. Without a doubt, this is a MAJOR increase in performance compared to my previous attempt.

I took this opportunity to learn LXC containers as well. LXC containers have a lot less overhead than traditional VMs. They shed that overhead by sharing the host's kernel instead of booting a full guest OS of their own. The trade-off is that their isolation is generally considered weaker than a full VM's.

Proxmox uses LXC templates to build containers, making it as simple as downloading a template for Ubuntu Server 22.04, deploying it and configuring as needed. The install process is a LOT faster than deploying it via traditional methods, and the container details are easy to change via the GUI.

I plan to add more RAM and storage to this machine as needed, and may explore upgrading the CPU to something with a higher core count or hyperthreading.

Multi-site Networking

My parents moved again recently, and this time it was further away than before. Personally, I wasn't fond of the distance, but what can I do if it's what they want? In response, I wanted to ensure I could assist remotely whenever possible, so I implemented some additional hardware.

For routing, firewall, and wireless networking, I chose Ubiquiti's all-in-one solution, the UniFi Dream Router. It packs a PoE switch, WiFi 6, firewall, IPS/IDS, router and controller into one small unit that's smaller than a gallon of milk.

As for switching, I deployed a UniFi Switch 8 and a UniFi Switch Flex Mini in central locations throughout the house.

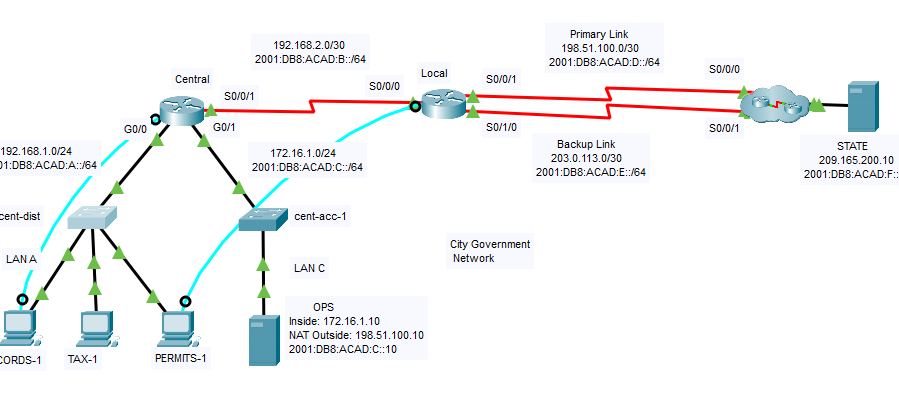

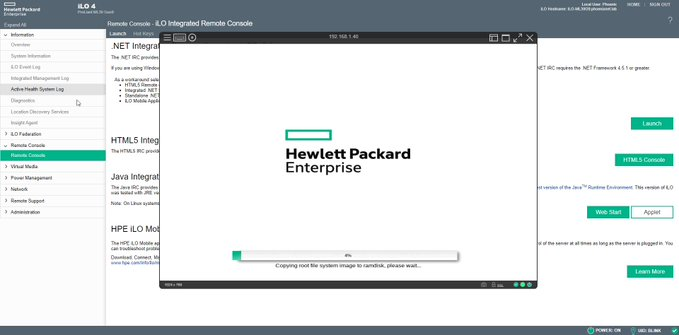

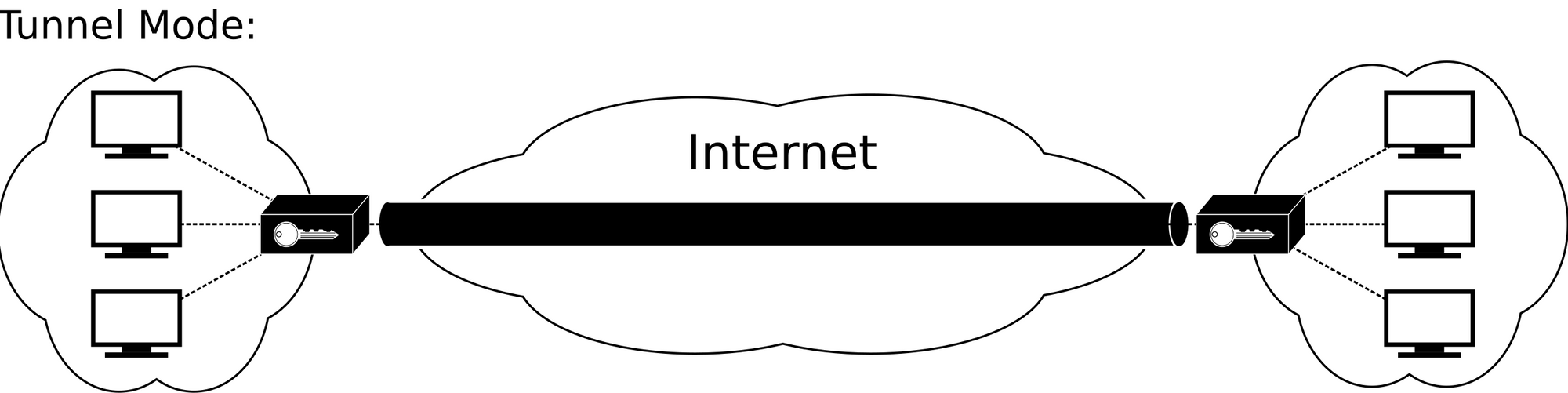

Now, for the icing on the cake, I set up an IPSec tunnel between the UDR and my UDMP, allowing multi-site communications!

For those unfamiliar, an IPSec tunnel is an encrypted link between two routers that lets network traffic traverse the internet as if the two networks were directly connected.

The tunnel lets my network (.1.0/24) communicate with their network (.20.0/24), which allows me to remotely administer and manage their network devices.

I also used the IPSec tunnel to deploy one of my existing UniFi Talk phones remotely. I deployed my UniFi Talk Flex in my dad's office so that he can dial out to any number, but also call internally to my desk phone! No more wasting money on calling my desk phone!

Shockingly, this works really well over such a long distance. I was rather surprised to see it "just work" and for calls to sound so crystal clear.
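One thing a site-to-site tunnel like this depends on is that the two subnets don't overlap, otherwise the routers can't tell which side an address lives on. Here's a small sketch of that check using Python's standard `ipaddress` module. Only the last two octets of each subnet are given above, so the `192.168` prefix is purely a placeholder, and the site names and helper functions are made up for illustration.

```python
import ipaddress

# Placeholder addressing: only ".1.0/24" and ".20.0/24" are known,
# so the 192.168 prefix here is an assumption for the example.
SITES = {
    "home": ipaddress.ip_network("192.168.1.0/24"),
    "parents": ipaddress.ip_network("192.168.20.0/24"),
}


def sites_overlap(sites: dict) -> bool:
    """Return True if any two site subnets share addresses."""
    nets = list(sites.values())
    return any(
        a.overlaps(b) for i, a in enumerate(nets) for b in nets[i + 1:]
    )


def site_for(address: str, sites: dict):
    """Return the name of the site whose subnet contains the address, or None."""
    ip = ipaddress.ip_address(address)
    for name, net in sites.items():
        if ip in net:
            return name
    return None


print(sites_overlap(SITES))              # False
print(site_for("192.168.20.15", SITES))  # parents
```

Because the two /24s are distinct, each router can send anything destined for the other side's range down the tunnel and keep everything else local.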

Like Son, Like Father

A while ago, my dad was asking me about NAS appliances. He was looking for something that could store his music library and documents centrally so that he and mom could access them from any device in the house.

I was on board with the idea 100%, and recommended the Synology DS218play / Synology DS220j. Ideally, he'd only really need two 4TB drives, maybe 6TB depending on how large his library is.

My dad switched over to a laptop recently, and in the process "retired" the old desktop I built him while I was in high school. I discussed with him the idea of taking some spare hardware I had and mashing it into that desktop, and he thought it was a solid compromise!

So, for Father's Day, I ended up building him a NAS. Here are the specs:

- Intel Core i5-7600K

- 8GB of DDR4 RAM

- Kensington 250GB SSD (TrueNAS Install)

- (3x) Seagate Constellation 2TB SAS drives

- Dell PERC H700 RAID card

- NVIDIA GT 1030 2GB

It's a pretty humble build, with plenty of room to grow if he wants to expand in the future. I don't think he'll be spinning up any VMs or hosting anything more than a Plex library, but it's certainly an option with this configuration.

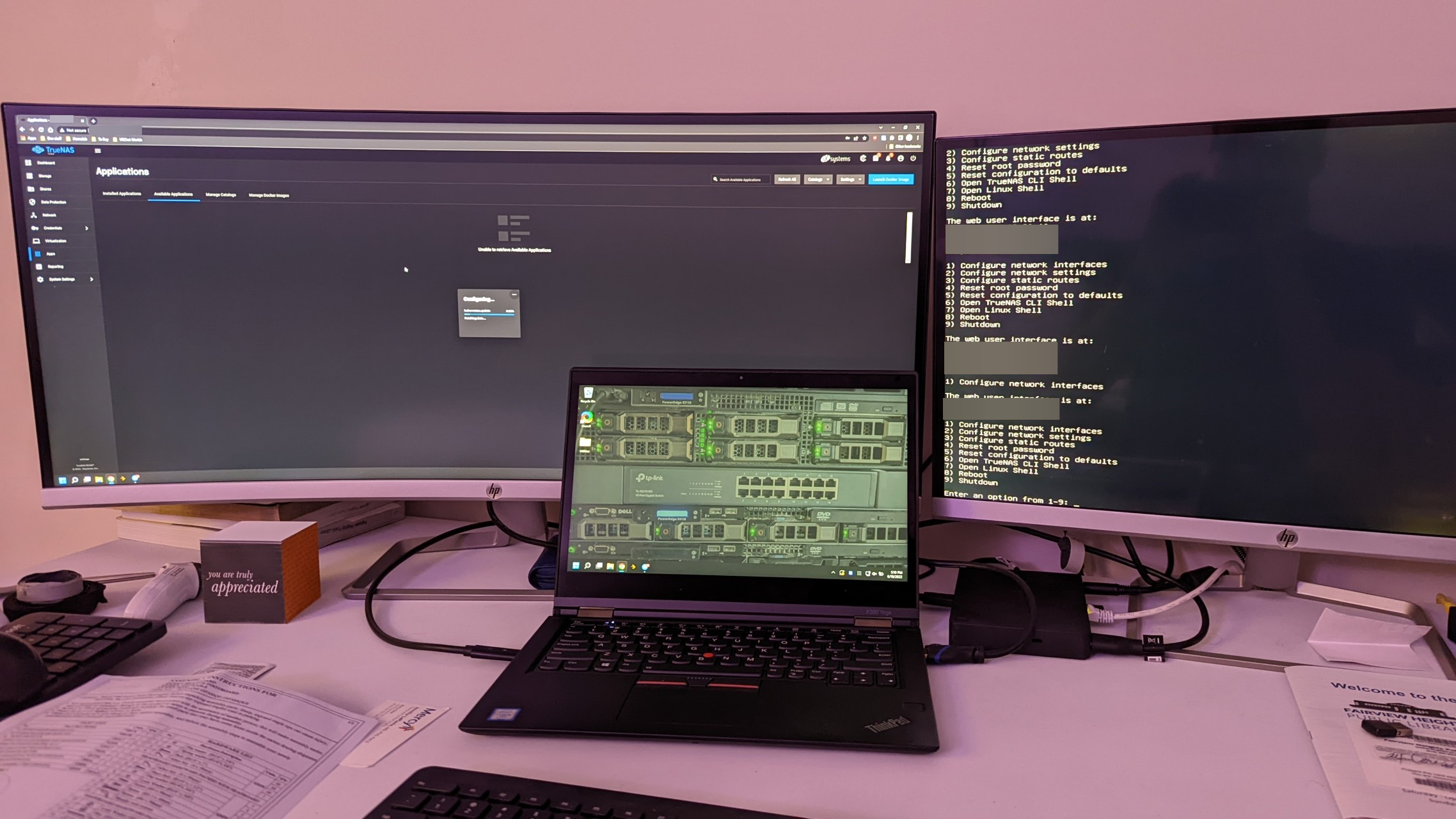

I decided on TrueNAS as the host OS as it's 100% free and open source, and I have some background using it in the past. This time, though, I went with TrueNAS SCALE, as it's built on Debian rather than FreeBSD, which makes it a lot less daunting.

Ideally in the future, I'd like to connect his TrueNAS server to my domain network for authentication, but for security reasons I'd prefer to deploy a 3rd DC on-prem for it to authenticate to, rather than authenticating over the IPSec tunnel. The same goes for DNS: instead of pushing it over the tunnel, I'd rather deploy the DNS role with the DC and set up a Pi-hole LXC to handle ad-blocking.

Lots of traffic over a tiny pipe isn't ideal. QoS is an option for VoIP traffic, but I'd like to avoid that if I can.

But all of those things are just ideas for the future. I like to give myself room to grow and not do everything at once. What's the fun in doing everything and having nothing else left to do?

That about wraps it up for now. I always have a passion for doing these projects, and I'm glad that I have a platform to share all of my experiences on.

Thank you for reading, and until next time!